Building a Vanilla JavaScript Application

In this tutorial, you’ll build a simple web application that detects objects in images using Transformers.js! To follow along, all you need is a code editor, a browser, and a simple server (e.g., VS Code Live Server).

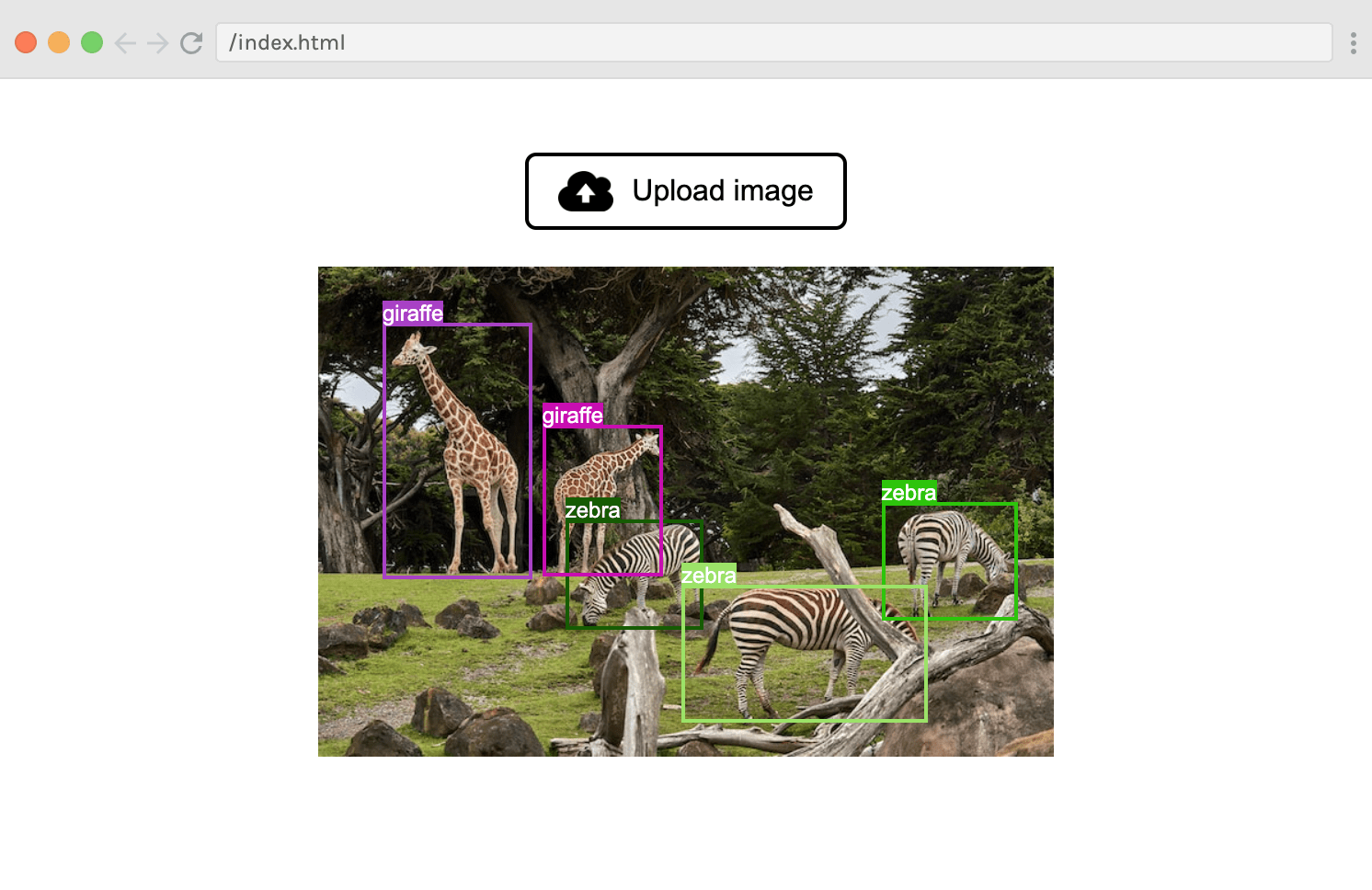

Here’s how it works: the user clicks “Upload image” and selects an image using an input dialog. Once the image has been analysed with an object detection model, the predicted bounding boxes are overlaid on top of it.

Step 1: HTML and CSS setup

Before we start building with Transformers.js, we first need to lay the groundwork with some markup and styling. Create an index.html file with a basic HTML skeleton, and add the following <main> tag to the <body>:

Next, add the following CSS rules in a style.css file and link it to the HTML:

Here’s how the UI looks at this point:

Step 2: JavaScript setup

With the boring part out of the way, let’s start writing some JavaScript code! Create a file called index.js and link to it in index.html by adding the following to the end of the <body>:

The type="module" attribute is important, as it turns our file into a JavaScript module, meaning that we’ll be able to use imports and exports.

Moving into index.js, let’s import Transformers.js by adding the following line to the top of the file:

Since we will be downloading the model from the BOINC AI Hub, we can skip the local model check by setting:
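Here’s a minimal sketch of the import and the setting. The CDN URL, including the `@xenova/transformers@2.6.0` package name and version, is an assumption, so swap in the release you actually want. At the top of index.js you would normally use the static `import` form shown in the comment; the sketch wraps a dynamic `import()` in a hypothetical `loadTransformers()` helper only so that it can also be loaded outside a browser.

```javascript
// Assumed CDN location of the library; adjust the version to the release you use.
const TRANSFORMERS_URL = "https://cdn.jsdelivr.net/npm/@xenova/transformers@2.6.0";

// Turn off the local model check so models are fetched from the Hub only.
function skipLocalModelCheck(env) {
  env.allowLocalModels = false;
  return env;
}

// Hypothetical helper: load the library and apply the setting.
// Static equivalent for the top of index.js:
//   import { pipeline, env } from "https://cdn.jsdelivr.net/npm/@xenova/transformers@2.6.0";
//   env.allowLocalModels = false;
async function loadTransformers(url = TRANSFORMERS_URL) {
  const { pipeline, env } = await import(url);
  skipLocalModelCheck(env);
  return { pipeline, env };
}
```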

Next, let’s create references to the various DOM elements we will access later:
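As a rough sketch, the lookups could be written like this. The element ids (`file-upload`, `image-container`, `status`) are assumptions about the markup from Step 1, so change them to match your own index.html. The hypothetical `getElements()` helper takes the document as a parameter so it can be tried with a stand-in object; in index.js you could just as well call `document.getElementById()` directly at the top level.

```javascript
// Collect references to the elements the app works with.
// NOTE: the ids below are assumed; they must match the ids in index.html.
function getElements(doc) {
  return {
    fileUpload: doc.getElementById("file-upload"),
    imageContainer: doc.getElementById("image-container"),
    status: doc.getElementById("status"),
  };
}

// In the browser:
//   const { fileUpload, imageContainer, status } = getElements(document);
```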

Step 3: Create an object detection pipeline

We’re finally ready to create our object detection pipeline! As a reminder, a pipeline is a high-level interface provided by the library to perform a specific task. In our case, we will instantiate an object detection pipeline with the pipeline() helper function.

Since this can take some time (especially the first time when we have to download the ~40MB model), we first update the status paragraph so that the user knows that we’re about to load the model.

To keep this tutorial simple, we’ll be loading and running the model in the main (UI) thread. This is not recommended for production applications: JavaScript is single-threaded, so the UI will freeze while the model is loading or running. To overcome this, you can use a web worker to download and run the model in the background. However, we’re not going to cover that in this tutorial…

We can now call the pipeline() function that we imported at the top of our file, to create our object detection pipeline:

We’re passing two arguments into the pipeline() function: (1) task and (2) model.

The first tells Transformers.js what kind of task we want to perform. In our case, that is object-detection, but there are many other tasks that the library supports, including text-generation, sentiment-analysis, summarization, or automatic-speech-recognition. See here for the full list.

The second argument specifies which model we would like to use to solve the given task. We will use Xenova/detr-resnet-50, as it is a relatively small (~40MB) but powerful model for detecting objects in an image.

Once the function returns, we’ll tell the user that the app is ready to be used.
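Putting the loading steps together, a sketch might look like the following. The `createDetector()` wrapper is hypothetical, and the exact status strings ("Loading model..." and "Ready") are assumptions; `pipeline` is the function imported from Transformers.js and `status` is the status paragraph element. Both are passed in as arguments so the function doesn’t rely on globals.

```javascript
// Create the object detection pipeline while keeping the user informed.
async function createDetector(pipeline, status) {
  // Let the user know that a (possibly slow) download is starting.
  status.textContent = "Loading model...";

  // First argument: the task. Second argument: the model to use for it.
  const detector = await pipeline("object-detection", "Xenova/detr-resnet-50");

  // The model is loaded, so the app can now accept images.
  status.textContent = "Ready";
  return detector;
}

// In index.js (a module, so top-level await is available):
//   const detector = await createDetector(pipeline, status);
```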

Step 4: Create the image uploader

The next step is to support uploading/selection of images. To achieve this, we will listen for “change” events from the fileUpload element. In the callback function, we use a FileReader() to read the contents of the image if one is selected (and nothing otherwise).

Once the image has been loaded into the browser, the reader.onload callback function will be invoked. In it, we append the new <img> element to the imageContainer to be displayed to the user.
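The uploader described above can be sketched as follows. The `setupUploader()` wrapper and its parameter names are hypothetical; the `doc` and `Reader` parameters default to the browser’s `document` and `FileReader`, so in index.js you would only pass the first two arguments. Reading the file as a data URL is one reasonable choice here, since the result can be assigned straight to the image’s `src`.

```javascript
// Listen for file selections and show the chosen image in the container.
function setupUploader(fileUpload, imageContainer, doc = document, Reader = FileReader) {
  fileUpload.addEventListener("change", (e) => {
    const file = e.target.files[0];
    if (!file) return; // nothing was selected, so there is nothing to do

    const reader = new Reader();

    // Runs once the file contents have been read into memory.
    reader.onload = (e2) => {
      imageContainer.innerHTML = ""; // remove any previously shown image
      const image = doc.createElement("img");
      image.src = e2.target.result; // the image encoded as a data URL
      imageContainer.appendChild(image);
      // detect(image);
    };

    reader.readAsDataURL(file);
  });
}

// In the browser: setupUploader(fileUpload, imageContainer);
```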

Don’t worry about the detect(image) function call (which is commented out) - we will explain it later! For now, try to run the app and upload an image to the browser. You should see your image displayed under the button like this:

Step 5: Run the model

We’re finally ready to start interacting with Transformers.js! Let’s uncomment the detect(image) function call from the snippet above. Then we’ll define the function itself:

NOTE: The detect function needs to be asynchronous, since we’ll await the result of the model.

Once we’ve updated the status to “Analysing”, we’re ready to perform inference, which simply means to run the model with some data. This is done via the detector() function that was returned from pipeline(). The first argument we’re passing is the image data (img.src).

The second argument is an options object:

We set the threshold property to 0.5. This means that we want the model to be at least 50% confident before claiming it has detected an object in the image. The lower the threshold, the more objects it’ll detect (but may misidentify objects); the higher the threshold, the fewer objects it’ll detect (but may miss objects in the scene).

We also specify percentage: true, which means that we want the bounding box for the objects to be returned as percentages (instead of pixels).
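A minimal sketch of the detect function, under a few assumptions: the hypothetical `makeDetect()` factory receives the detector and the status element as arguments (in index.js they could simply be the top-level variables), and the exact status strings are guesses. The returned function keeps the `detect(image)` call shape used in the uploader.

```javascript
// Build a detect(img) function bound to a detector and a status element.
function makeDetect(detector, status) {
  return async function detect(img) {
    status.textContent = "Analysing...";

    // Run the model on the image data, using the options described above.
    const output = await detector(img.src, {
      threshold: 0.5, // only keep detections with at least 50% confidence
      percentage: true, // box coordinates relative to the image size, not pixels
    });

    status.textContent = "";
    console.log("output", output);
    return output;
  };
}

// In the browser:
//   const detect = makeDetect(detector, status);
```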

If you now try to run the app and upload an image, you should see the following output logged to the console:

In the example above, we uploaded an image of two elephants, so the output variable holds an array with two objects, each containing a label (the string “elephant”), a score (indicating the model’s confidence in its prediction) and a box object (representing the bounding box of the detected entity).

Step 6: Render the boxes

The final step is to display the box coordinates as rectangles around each of the elephants.

At the end of our detect() function, we’ll run the renderBox function on each object in the output array, using .forEach().

Here’s the code for the renderBox() function with comments to help you understand what’s going on:
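One way renderBox() could look is sketched below. It assumes that, with percentage: true, the box coordinates come back as fractions between 0 and 1, and that the CSS class names are `bounding-box` and `bounding-box-label`; both are assumptions to check against your own output and stylesheet. The `boxToStyle()` and `randomColor()` helpers are hypothetical names introduced for readability, and the container and document are passed in rather than read from globals.

```javascript
// Convert fractional box coordinates into CSS percentage values.
function boxToStyle({ xmin, ymin, xmax, ymax }) {
  return {
    left: 100 * xmin + "%",
    top: 100 * ymin + "%",
    width: 100 * (xmax - xmin) + "%",
    height: 100 * (ymax - ymin) + "%",
  };
}

// Pick a random hex color such as "#3fa2c8" for the box and its label.
function randomColor() {
  return "#" + Math.floor(Math.random() * 0xffffff).toString(16).padStart(6, "0");
}

// Draw one detection as a bordered rectangle with a text label.
function renderBox({ box, label }, imageContainer, doc = document) {
  const color = randomColor();

  // The rectangle itself, positioned relative to the image container.
  const boxElement = doc.createElement("div");
  boxElement.className = "bounding-box";
  Object.assign(boxElement.style, { borderColor: color, ...boxToStyle(box) });

  // The label, using the same color as the border.
  const labelElement = doc.createElement("span");
  labelElement.textContent = label;
  labelElement.className = "bounding-box-label";
  labelElement.style.backgroundColor = color;

  boxElement.appendChild(labelElement);
  imageContainer.appendChild(boxElement);
}

// At the end of detect():
//   output.forEach((item) => renderBox(item, imageContainer));
```

The arrow function in the forEach() call matters with this signature: passing renderBox directly would hand it the array index as its second argument.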

The bounding box and label span also need some styling, so add the following to the style.css file:

And that’s it!

You’ve now built your own fully functional AI application that detects objects in images and runs completely in your browser: no external server, APIs, or build tools. Pretty cool! 🥳

The app is live at the following URL: https://boincai.com/spaces/Scrimba/vanilla-js-object-detector